The Voice That Betrays You: How a Few Seconds of Your Life Becomes a Criminal’s Weapon

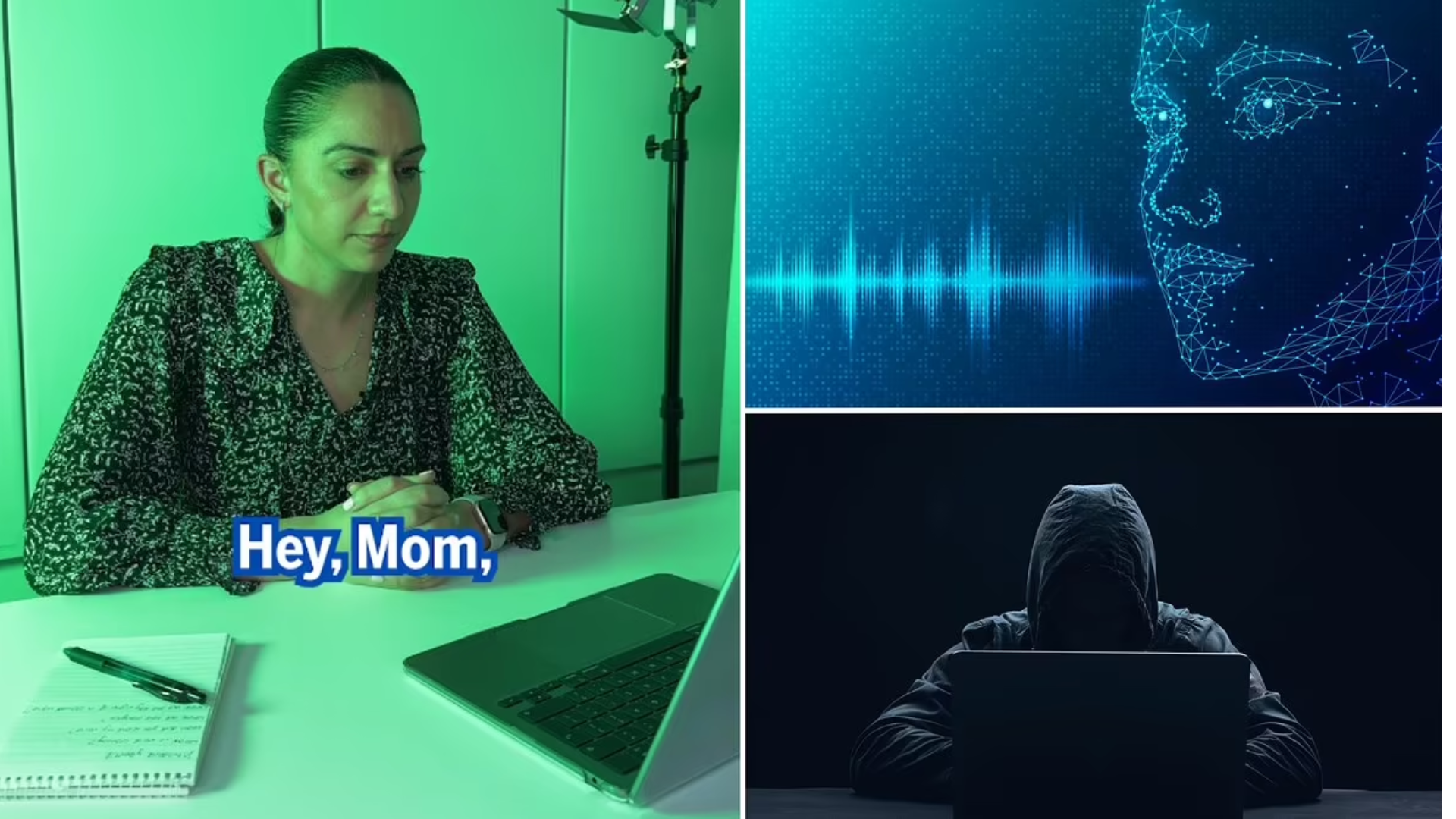

It starts with something you’d never think to hide: the sound of your own joy. A video from your birthday where you laugh and say, “Thank you, guys!” A podcast interview where you talk about your work. The voicemail greeting your mom has saved for a decade. In our shared digital life, we’ve left thousands of these vocal footprints: tiny, innocent recordings of our most human moments. Now criminals are picking them up, feeding them into a machine, and weaponizing them against the people who love and trust us most. Deepfake voice cloning scams are a masterclass in modern psychological manipulation, turning our deepest instincts (to protect our children, obey authority, and rescue loved ones) against us. This isn’t a complex hack; it’s a simple, brutal con made possible by artificial intelligence that can now replicate the one thing we thought was uniquely ours: our voice.

At TrueKnowledge Zone, we’ve spoken to victims whose worlds were shattered not just by financial loss, but by the violation of hearing a perfect ghost of their child beg for help. This scam works because it doesn’t feel like a scam. It feels like a crisis, and in a crisis, we don’t think—we react. Let’s pull back the curtain on this cruel process, from the casual moment you post online to the heart-stopping phone call that empties a bank account.

The Harvest: Scraping Your Digital Vocal Print

The first step requires no interaction with the victim. It’s a quiet, automated search for raw material.

Mining Public Content for “Clean Audio”

Criminals use bots to scour the internet for target-rich audio sources. The ideal clip is clear of background music or noise, with the target speaking in an emotional, expressive tone. Favorite hunting grounds include:

- Social Media Videos: TikTok, Instagram Reels, Facebook birthday tribute videos.
- Professional Profiles: LinkedIn presentation videos, conference talks posted on YouTube.
- Public Appearances: Local news interviews, community podcast appearances.
- Legacy Content: Old family videos uploaded to cloud storage or sharing sites.

A criminal doesn’t need hours. A single, clean sentence—like “I’m so happy to be here!”—can be enough for many AI cloning platforms to build a foundational voice model.

The Dark Web Marketplace for “Voice Kits”

For scammers lacking the skill to scrape, there’s a marketplace. On encrypted forums, you can buy “voice kits”: pre-cloned, high-quality digital models of common “personas.” Need the voice of a generic “American teen girl,” a “concerned middle-aged father,” or an “authoritative male executive”? For a fee, these cloned voice profiles can be downloaded and fed into a text-to-speech engine, ready to say anything the scammer types. This commoditization has turned voice cloning from a niche skill into a point-and-click crime.

The Insider Threat: The Shortest Path to Perfection

In targeted business scams, the criminal’s goal is often a specific executive. If public audio is scarce, they may turn to a simpler method: recording a phone call. A scammer might pose as a journalist, recruiter, or vendor to get a 10-minute call with the target, asking open-ended questions to capture a wide range of vocal emotions. This recording becomes a goldmine for creating a convincing, real-time clone.

The Arsenal: Tools That Turn Clones into Weapons

The cloning itself happens on platforms that range from disturbingly accessible to frighteningly sophisticated.

Consumer-Grade AI Voice Platforms

Websites like ElevenLabs, Murf, and Play.ht offer public voice cloning features. While they have ethical policies, these safeguards are easily bypassed. A scammer can upload a short audio sample, type any script, and generate an MP3 file of “you” saying those words in minutes. The cost is minimal, often covered by a free trial or a small subscription.

Open-Source AI Models and “FraudGPT” Variants

For more advanced criminals, open-source AI models available on code repositories like GitHub can be fine-tuned for voice cloning with no oversight. Furthermore, malicious versions of large language models, dubbed “FraudGPT” or “WormGPT,” are sold on the dark web. These are sold as purpose-built cybercrime assistants and may include integrated voice cloning modules with no safeguards, along with scripts for social engineering.

Real-Time Voice Conversion (RTC) Software

This is the most dangerous evolution. RTC software doesn’t just generate a static audio file; it can modify a scammer’s own voice in real time during a phone call. As the criminal speaks into their microphone, the software alters their vocal characteristics to match the cloned target voice with a delay of just milliseconds. This allows for dynamic, two-way conversation, enabling the scammer to respond to a victim’s questions and escalate panic convincingly.

The Con: The Scripted Crisis That Hijacks Your Brain

With the cloned voice ready, the criminal deploys a psychological playbook perfected over centuries, now supercharged by AI authenticity.

The Family Emergency Scam (“The Panic Play”)

This is the most common and most devastating variant. The scammer uses the cloned voice of a teen or young adult.

- The Hook: A call to a parent, often from a spoofed number. The cloned voice is sobbing, hysterical. “Mom/Dad, I’m in jail! I was in a car accident!” Background noise of yelling may be added.
- The Twist: A “police officer” or “lawyer” (another scammer) gets on the line. They confirm the “crisis,” emphasize urgency and secrecy (“Don’t tell anyone, it will make it worse”), and demand immediate payment for “bail” or “hospital fees,” usually via untraceable methods like wire transfer, cryptocurrency, or gift cards.
- Why It Works: It triggers a primal, biological panic response (amygdala hijack). The parent’s brain is flooded with stress hormones, shutting down logical reasoning. The perfect voice short-circuits disbelief.

The Business Executive Scam (“The Authority Play”)

This targets employees, often in finance or accounting departments.

- The Hook: A call or voicemail from a cloned executive (CFO, CEO). The tone is urgent, slightly irritated, and busy. “This is [Name]. I need you to process a confidential wire transfer immediately for an acquisition. I’m in back-to-back meetings, text me when it’s done.”
- The Follow-Up: Spoofed emails with wiring details arrive, seemingly from the executive. If the employee hesitates or asks a verifying question, the scammer uses the real-time clone or a pre-generated response to apply pressure (“This is time-sensitive. Do I need to get someone else?”).
- Why It Works: It exploits hierarchy, fear of disobeying authority, and a desire to be a proactive team player. The employee’s instinct is to solve the boss’s problem, not question their identity.

The Grandparent Scam 2.0 (“The Trust Play”)

A classic scam, now with horrifying authenticity.

- The Hook: An elderly person receives a call from their “grandchild.” The cloned voice is calm but scared. “Grandpa, I’m in trouble. I need help, but please don’t tell my mom.”
- The Build: The scammer spins a tale of a minor legal issue, car repair, or medical bill. They ask for money to be sent via cashier’s check, money transfer (MoneyGram, Western Union), or gift cards read over the phone.
- Why It Works: It preys on the deep trust and protective instinct grandparents have, combined with a potential generational gap in understanding the technology. The familiar voice disarms all suspicion.

Case Study: “Mark’s” Bad Day – A Business Scam in Action

David, an accounts payable clerk at a mid-sized manufacturing firm, received a voicemail at 8:05 AM. It was his CEO, Mark. “David, Mark here. Critical vendor payment needed today. I’ll email details. I’m in flight, call my cell if any issues.” The voice was unmistakable—Mark’s slight Boston accent, his brisk pace.

An email from “Mark’s” address arrived minutes later with wiring instructions for $187,000 to a new “equipment vendor.” David processed the paperwork but hit a snag requiring a second authorization. He called the cell number in the email.

“Mark” answered, sounding harried. “David, what is it? I’m about to board a connection.” David explained the hiccup. “For God’s sake, just approve it. This deal is falling apart as we speak!” the voice snapped. David, not wanting to be the reason a deal failed, overrode the protocol.

The real Mark was not in an airport. He was at his desk. The cloned voice was created from six months of weekly “All-Hands” update videos Mark posted internally—videos an IT contractor had accessed and sold.

The Human Toll: Beyond the Financial Loss

While the financial damage is immense (the FBI’s Internet Crime Complaint Center reports billions of dollars in annual losses to online fraud overall), the psychological scars run deeper.

- Shattered Trust: Victims report a lasting paranoia. The sound of a loved one’s voice, once a comfort, can now trigger anxiety.
- Profound Shame: The feeling of being “so stupid” to fall for it is crushing, despite the scam being engineered to bypass logic.
- Relational Strain: Families and workplaces can experience blame and mistrust in the aftermath.

Your Defense: Building a “Verification Imperative”

In this new reality, your ears are no longer reliable witnesses. You must build new habits.

1. Establish a Family Password or Challenge Question.

This is your digital safe word. It must be obscure (“What was the name of our first pet hamster?”). Any urgent call without it is an automatic red flag.

2. Hang Up and Initiate Your Own Call.

The single most effective action. If you get a distress call, say nothing of value, hang up immediately, and call the person back on a known, trusted number from your own contacts. If they don’t pick up, call other family or friends to locate them. A real person in crisis will be reachable; a scammer’s story will fall apart.

3. Implement Business Process Controls.

- Dual-Verification Mandate: Any payment instruction must be verified by a second, known authority via a separate channel (e.g., phone call to a known number).
- Slowing Down Urgency: Mandate a cooling-off period for all new vendor payments or changes to payment info. Urgency is the scammer’s oxygen.
- Employee Training: Drill the “Hang Up and Call Back” protocol. Use simulated deepfake vishing attacks in security training.
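
The first two controls above can be written down as a simple release rule. What follows is a minimal Python sketch, not a real payments system: the `PaymentRequest` fields, the `release_decision` function, and the 24-hour cooling-off value are illustrative assumptions, since no specific duration or data model is prescribed here.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional, Tuple

# Assumed value for illustration; pick whatever your own policy requires.
COOLING_OFF = timedelta(hours=24)


@dataclass
class PaymentRequest:
    requester: str                      # who asked for the payment (e.g. "the CEO")
    vendor: str
    amount: float
    vendor_changed_at: datetime         # when the vendor or its bank details were added or last changed
    verified_by: Optional[str] = None   # second person who confirmed the request
    verified_via_known_number: bool = False  # True only if confirmed by calling a number already on file


def release_decision(req: PaymentRequest, now: datetime) -> Tuple[bool, str]:
    """Return (allowed, reason). Note there is deliberately no 'urgent' override."""
    # Dual verification: someone other than the requester must confirm.
    if req.verified_by is None or req.verified_by == req.requester:
        return False, "needs verification by a second, known authority"
    # Separate channel: a number from the request itself does not count.
    if not req.verified_via_known_number:
        return False, "verify via a separate channel (call a number already on file)"
    # Cooling-off: new vendors and changed payment details must wait.
    if now - req.vendor_changed_at < COOLING_OFF:
        return False, "cooling-off period for new or changed vendor details has not elapsed"
    return True, "ok to release"
```

Under this rule, a request like the one in the case study (a brand-new vendor, confirmed only by calling the number supplied in the email) would be refused at the first check, however angry the voice on the phone sounded.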

4. Lock Down Your Digital Footprint.

Audit your social media. Make profiles private. Remove public videos with vocal audio. Ask family members to do the same. Your voice is personal data; treat it that way.

Criminals using deepfake voice cloning have found the perfect blend of high-tech deception and low-tech emotional manipulation. They aren’t breaking into vaults; they’re walking in through the front door we opened with our own shared memories. The defense is not technological, but behavioral. It’s a simple, disciplined pause. It’s the courage to hang up on your “sobbing child” to make a second call. That moment of verification is the modern moat around your castle. In the age of the perfect fake, your greatest shield is your own deliberate skepticism.

10 Frequently Asked Questions (FAQs)

1. How long does it take to clone a voice?

With modern AI tools, a basic, usable clone can be generated in under two minutes from a clean audio sample. A more robust, emotionally versatile clone for dynamic scams might take 10-15 minutes of processing time. The speed makes this crime highly scalable.

2. Can they clone a voice from a regular phone call?

Yes. While landline quality is poorer, the audio from a standard cellular or VoIP call (like WhatsApp, FaceTime Audio) is sufficient for most cloning algorithms. Scammers often record these calls specifically to harvest voice samples for future, more targeted attacks.

3. I think I was targeted but didn’t lose money. What should I do?

Report it. File a report with the FBI’s Internet Crime Complaint Center (IC3.gov) and your local police. Your report adds crucial data on scammer tactics, phone numbers, and scripts, helping law enforcement connect dots and disrupt networks.

4. Are there any verbal tics or signs that can reveal a deepfake voice?

Sometimes. Listen for: unnaturally even cadence (like a news anchor), slight digital artifacts or robotic tones on certain syllables, lack of mouth sounds (lip smacks, breaths), and an inability to naturally laugh, cough, or sigh on command. However, do not rely on this. The technology is improving fast. Always verify.

5. Who is most vulnerable to these scams?

While everyone is a target, the highest-risk groups are parents of teenagers and college students (high social media audio footprint), elderly individuals (strong trust in voice, less tech familiarity), executive assistants and finance staff (conditioned to act on authority), and immigrants, who may be afraid to involve the authorities when a scammer invokes “ICE” or “police.”

6. If I wire money, can my bank get it back?

Extremely unlikely. Wire transfers, especially international ones, are often instantaneous and final. Cryptocurrency and gift card payments are completely irreversible. Banks view this as “authorized” fraud because you instructed the transfer. Recovery rates are in the low single digits.

7. Is voice recognition security (for banking apps, etc.) still safe?

It is now a significant vulnerability. If a scammer has a high-quality clone of your voice, they could potentially bypass voice-based authentication systems. Any service relying solely on voice recognition should be considered insecure. Enable multi-factor authentication (MFA) using an authenticator app or hardware key instead.
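
To see why an authenticator app holds up where a voiceprint does not: the code it displays is computed from a shared secret and the current time, so a cloned voice gives the attacker nothing to work with. Below is a minimal sketch of the standard time-based one-time password calculation (RFC 6238) using only Python’s standard library. The function names `totp` and `verify` are illustrative, and real services add rate limiting, clock-drift tolerance, and secure secret storage, all omitted here.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret: bytes, at_time: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """Compute an RFC 6238 time-based one-time password (HMAC-SHA1 variant)."""
    now = time.time() if at_time is None else at_time
    counter = int(now // step)                      # number of 30-second steps since the Unix epoch
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F                      # dynamic truncation (RFC 4226)
    value = int.from_bytes(digest[offset:offset + 4], "big") & 0x7FFFFFFF
    return str(value % (10 ** digits)).zfill(digits)


def verify(secret: bytes, submitted: str, at_time: Optional[float] = None) -> bool:
    """Check a submitted code against the current one using a constant-time comparison."""
    return hmac.compare_digest(totp(secret, at_time), submitted)
```

With the published RFC 6238 test secret `b"12345678901234567890"` and `at_time=59`, `totp(..., digits=8)` yields `94287082`, matching the specification’s test vector. A hardware key goes further by using public-key cryptography instead of a shared secret, which this sketch does not cover.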

8. What if the scammer threatens violence if I hang up or don’t pay?

This is a universal bluff designed to prevent verification. Hang up immediately. Then, call your loved one directly or contact other family/friends to confirm their safety. The scammer’s power exists only on that phone line. Once you break contact, their threat evaporates.

9. What are tech companies or lawmakers doing about this?

Tech: Some AI voice platforms are implementing audio watermarking and stricter ethics checks, but these are easily bypassed. Detection tools are in development but lag behind creation tools.

Law: A few states have passed laws against malicious deepfakes, and federal bills are proposed, but enforcement across jurisdictions is a nightmare. The legal framework is struggling to keep pace.

10. What’s the one thing I should tell my family today?

Have this conversation: “If I, or anyone claiming to be me, ever calls you in a panic asking for money, it is a scam. Hang up. Then call me directly on my usual number to check. We need a family password. Let’s choose one right now.” This 60-second talk could save your life’s savings.
