In 2026, AI voice cloning has become both a marvel and a menace. The ability to replicate human voices with alarming accuracy opens doors for creativity, entertainment, and accessibility. But it also empowers scammers to commit fraud in unprecedented ways. AI Voice Cloning Fraud: Real Cases and Prevention explores how criminals exploit this technology and practical steps to protect yourself.

At TrueKnowledge Zone, we understand that hearing a familiar voice can build trust—but scammers now exploit that instinct. Everyday people, professionals, and organizations are vulnerable to AI-generated voice fraud, making awareness, prevention, and verification essential.

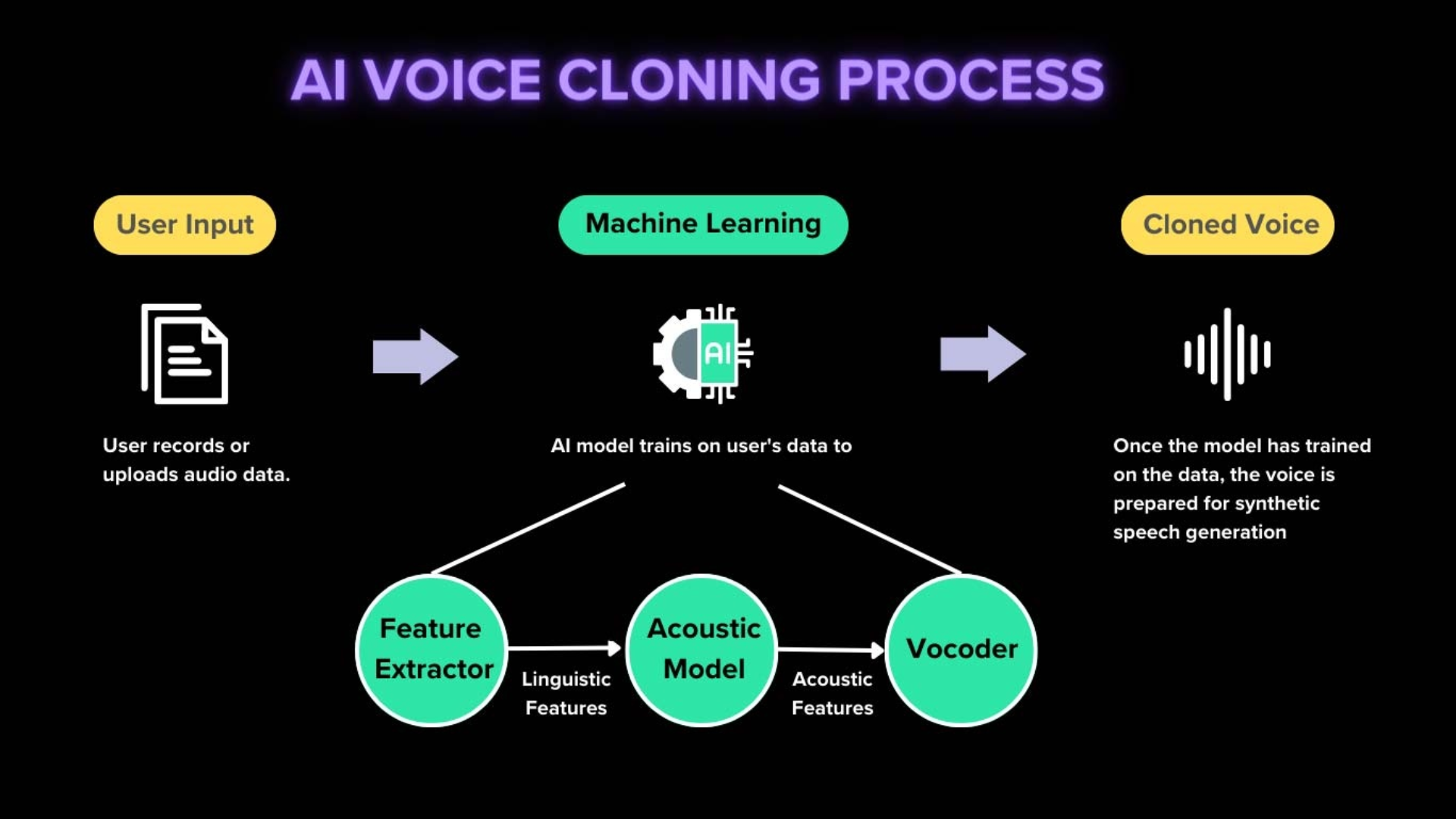

How AI Voice Cloning Works

Deep Learning Models

AI voice cloning uses deep learning to analyze speech patterns, tone, and rhythm, creating near-perfect replicas.

Minimal Audio Requirements

Even a few seconds of recorded speech can be enough to generate convincing clones.

Real-Life Example: Fraudulent Calls

Criminals used a voice clone built from a short recording of a CEO to instruct employees to transfer funds, leading to significant financial losses.

Common Voice Cloning Scams

Executive Impersonation

Scammers imitate executives’ voices to authorize payments or access sensitive information.

Personal Relationship Exploitation

Fraudsters clone voices of loved ones to manipulate emotions and request money.

Case Study: Employee Scam

A European company lost over $200,000 after an employee followed instructions from a cloned voice call.

Social Engineering Techniques

Trust Exploitation

Humans naturally trust familiar voices, making them vulnerable to requests without verification.

Urgency and Pressure

Scammers often create time-sensitive scenarios to force hasty decisions.

Real-Life Example: Romance Scams

Victims sent money to scammers who used AI-cloned voices to impersonate their partners, believing the requests were genuine.

AI Voice Fraud in Financial Institutions

Bank Impersonation

Voice cloning is used to bypass security checks, authorize transfers, or access accounts.

Automated Scam Calls

AI-generated calls can interact naturally, increasing the likelihood of victim compliance.

Case Study: Bank Security Breach

A bank detected multiple deepfake voice attempts, prompting a system-wide update to multi-factor authentication.

Detection Challenges

Convincing Realism

Advanced AI can mimic speech idiosyncrasies, emotions, and accents, making detection difficult.

Lack of Awareness

Employees or individuals unaware of voice cloning may act on fraudulent calls.

Practical Tip: Verify Independently

Always confirm requests through a secondary channel before acting on a voice request.
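The "secondary channel" rule can be sketched in a few lines of code. This is only an illustration under stated assumptions: the `KNOWN_CONTACTS` directory, the `VoiceRequest` fields, and the phone numbers are hypothetical placeholders, not part of any real system. The point it shows is that the callback number comes from a record made before the call, never from the call itself.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical directory of contact details recorded BEFORE any request arrived.
KNOWN_CONTACTS = {
    "ceo": "+1-555-0100",
    "cfo": "+1-555-0101",
}


@dataclass
class VoiceRequest:
    claimed_sender: str                      # who the caller says they are
    caller_number: str                       # number shown on the call (can be spoofed)
    suggested_callback: Optional[str] = None  # number the caller asks you to use


def trusted_callback_number(request: VoiceRequest) -> Optional[str]:
    """Return the number to call back, taken only from the trusted directory.

    Any number supplied during the call is deliberately ignored.
    Returns None when the claimed sender is not in the directory.
    """
    return KNOWN_CONTACTS.get(request.claimed_sender.strip().lower())


request = VoiceRequest("CEO", "+1-555-9999", suggested_callback="+1-555-9999")
number = trusted_callback_number(request)
if number is None:
    print("Unknown sender: do not act, escalate instead.")
else:
    print(f"Hang up and call back on {number} before acting.")
```

If the claimed sender is not in the directory, the safest reading of the rule is to treat the request as unverified rather than fall back to the number the caller provided.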

Preventive Measures for Individuals

Multi-Factor Verification

Verify sensitive requests through a second channel, such as a call to a number you already know, an email, or a secure app.

Educate Your Network

Share knowledge about AI voice fraud with family, friends, and colleagues.

Real-Life Example: Avoiding Scams

Individuals who confirmed requests via alternate channels avoided transferring funds to scammers.

Corporate Prevention Strategies

Employee Training

Regular workshops educate staff about recognizing AI-generated voices and social engineering tactics.

Internal Verification Protocols

Companies implement verification steps for financial or sensitive communications.
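One way such a protocol might look in practice is a simple rule check that runs before any payment is released. The sketch below is an assumption-laden example, not a description of any specific company's process: the `PaymentRequest` fields, the `APPROVAL_THRESHOLD` value, and the two-approver rule are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass, field

# Hypothetical policy value: payments at or above this need two approvers.
APPROVAL_THRESHOLD = 10_000


@dataclass
class PaymentRequest:
    amount: float
    requested_via: str                    # e.g. "voice", "email", "ticket"
    confirmed_out_of_band: bool = False   # confirmed through a separate, trusted channel
    approvers: set = field(default_factory=set)


def may_execute(request: PaymentRequest) -> bool:
    """Apply two illustrative rules before a payment can go out.

    1. A request that arrived by voice must be confirmed out of band.
    2. A large payment needs at least two distinct approvers.
    """
    if request.requested_via == "voice" and not request.confirmed_out_of_band:
        return False
    if request.amount >= APPROVAL_THRESHOLD and len(request.approvers) < 2:
        return False
    return True


urgent_call = PaymentRequest(amount=50_000, requested_via="voice")
print(may_execute(urgent_call))  # False: not confirmed, no approvers

urgent_call.confirmed_out_of_band = True
urgent_call.approvers.update({"alice", "bob"})
print(may_execute(urgent_call))  # True: confirmed and dual-approved
```

The design choice worth noting is that urgency plays no part in the check: a convincing voice and a tight deadline cannot bypass either rule.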

Case Study: Corporate Defense

Firms with proactive AI fraud policies report fewer incidents and faster response times.

Technology Solutions

AI Detection Tools

Emerging software analyzes voice patterns to detect anomalies indicative of cloning.

Blockchain Verification

Some organizations experiment with blockchain to verify authentic communications and prevent voice fraud.

Real-Life Example: Secured Transactions

Using AI-assisted verification tools, banks detected and blocked cloned voice fraud attempts in real time.

Legal and Regulatory Actions

Anti-Fraud Legislation

Governments worldwide are introducing laws to penalize malicious voice cloning.

Reporting Mechanisms

Mandatory reporting of AI voice fraud increases accountability and helps prevent future crimes.

Real-Life Example: Regulatory Success

Several cases led to arrests and fines, demonstrating the importance of legal frameworks in protecting the public.

Preparing for the Future

Continuous Awareness

AI technology will evolve, and ongoing education is crucial for individuals and organizations.

Combining Technology with Human Oversight

AI detection tools work best when paired with human vigilance, especially for high-risk communications.

Case Study: Holistic Defense

Companies integrating AI detection, employee training, and strict verification protocols reduced voice fraud incidents by 70%.

Practical Tips for Protection

- Always Verify Requests: Never act on voice messages without confirmation.
- Enable Multi-Factor Authentication: Protect accounts and transactions.
- Educate Yourself and Others: Awareness is the first defense.
- Use Trusted Detection Tools: AI-assisted verification can detect cloned voices.
- Report Suspicious Activity: Notify authorities or organizations immediately.

Frequently Asked Questions

1. What is AI voice cloning?

AI voice cloning uses machine learning to replicate a person’s voice, creating realistic audio for various applications.

2. How do scammers use it?

Scammers impersonate executives, loved ones, or trusted contacts to commit fraud, manipulate, or steal information.

3. Can AI voice fraud be detected?

Yes, with AI detection tools and careful verification, though highly advanced clones may be challenging to spot.

4. Are social media platforms vulnerable?

Yes, scammers can share cloned voices via calls, messages, or voice notes, bypassing platform protections.

5. How can individuals protect themselves?

Use multi-factor verification, confirm requests through alternate channels, and remain vigilant.

6. What should companies do?

Train employees, implement verification protocols, and deploy AI detection systems to prevent fraud.

7. Is voice cloning illegal?

Voice cloning itself is not inherently illegal, but using it for fraud or deception is criminal in most jurisdictions.

8. Can AI help prevent scams?

Yes, AI tools analyze audio patterns, detect anomalies, and alert users to potential fraud.

9. Are small businesses at risk?

Yes, any organization handling sensitive data, finances, or communications can be targeted.

10. Where can I learn more?

Trusted resources like TrueKnowledge Zone, cybersecurity blogs, and government advisories provide up-to-date guidance.

Conclusion

AI Voice Cloning Fraud: Real Cases and Prevention shows that technology can empower, but also deceive. Awareness, verification, and the use of AI detection tools are critical to protecting individuals and organizations.

Stay vigilant, educate your network, and adopt security protocols to safeguard your finances, identity, and reputation. In a world where voices can be cloned, seeing—or hearing—is not always believing.
