The Unseen Battlefield: How Artificial Intelligence is Redefining the Very Nature of Cyber Warfare

For decades, cyber warfare was a shadowy arena of human hackers, painstaking code, and sporadic digital skirmishes. The front lines were server rooms and firewalls; the weapons, lines of malicious code; the soldiers, individuals with specialized skills. Today, a seismic and silent shift is occurring. The battlefield is no longer just in servers, but in algorithms. The soldiers are no longer just humans, but autonomous digital agents. The tempo is no longer sporadic, but continuous and machine-speed. How artificial intelligence is changing cyber warfare is not a speculative question—it is the defining reality of 21st-century conflict. We have moved from an era of cyber tactics to an era of AI-driven cyber strategy, where the ability to learn, adapt, and act at scale is becoming the ultimate determinant of power. This transformation is reshaping doctrines, redrawing red lines, and creating a new, unstable paradigm where escalation can happen in milliseconds and attribution becomes a ghost in the machine.

Two decades of analyzing conflict in the digital domain make the pattern clear: AI is not merely a new tool in the old cyber warfare toolbox. It is fundamentally altering the toolbox itself, introducing new classes of weapons, new strategies of engagement, and new, profound vulnerabilities that challenge our very conception of security and sovereignty.

From Human-Scale to Hyperscale: The New Tempo of Conflict

The most immediate and jarring change AI brings is one of sheer scale and speed, compressing the traditional “observe, orient, decide, act” (OODA) loop to near zero.

The End of the “Patch Window” and the Rise of Instant Exploitation

In traditional cyber conflict, a discovered software vulnerability created a race. Defenders had a “patch window” of days or weeks to fix the flaw before it could be widely weaponized. AI, specifically machine learning models trained on code, threatens to shrink this window to hours or even minutes. Code-generation models such as OpenAI’s Codex, or their malicious dark web equivalents, can ingest a public vulnerability description (a CVE entry) and help produce exploit variants far faster than a human working alone. A flaw can therefore go from disclosure to use in a global, automated attack campaign before most system administrators have read the morning’s threat bulletin. Against this “instantaneous offense,” the defensive concept of “rapid response” starts to look obsolete.

Automated Reconnaissance and Target Mapping at Planetary Scale

Gone are the days of manually probing a few high-value targets. AI-powered bots can now continuously crawl the entire internet—every connected device, every public-facing server, every piece of software—to build a living, breathing map of global vulnerabilities. They don’t just find known weaknesses; they infer new ones by analyzing patterns across millions of systems. This allows a state actor to have a real-time inventory of potential attack vectors across an adversary’s entire digital economy, from power grids to personal devices, enabling strategic targeting on an unprecedented scale.

Swarm Tactics: The Autonomous Attack Fleet

The pinnacle of this scale is the AI swarm. Imagine not a single piece of malware, but a coordinated fleet of thousands of lightweight, autonomous agents. Some are scouts, probing defenses. Others are decoys, creating distracting noise. Specialized “breaker” agents attempt exploits, while “loader” agents deliver payloads. They communicate via covert channels, share intelligence on defensive measures, and adapt their collective strategy in real-time. Defending against this is not a matter of blocking one threat, but of surviving a coordinated, intelligent, and adaptive onslaught that can overwhelm human analysts through sheer complexity and volume.

The New Arsenal: AI-Specific Weapons of Digital Conflict

AI has given rise to entirely new classes of cyber weapons that target not just systems, but perception, trust, and societal stability itself.

Deepfakes and Synthetic Media as Weapons of Mass Deception

This is perhaps the most insidious change. AI-generated video, audio, and text are moving beyond fraud into the realm of information warfare and strategic influence. The fear is no longer of fake news, but of “proof”—fabricated evidence so credible it triggers real-world consequences.

- Strategic Deepfakes: A fabricated video of a national leader declaring war or surrendering, released at a moment of crisis, could trigger panic, market collapse, or even accidental military retaliation.

- Social Destabilization: AI can generate millions of unique, persuasive social media personas to artificially amplify division, spread disinformation tailored to specific demographic and psychographic segments, and manipulate public opinion at a scale that makes past influence ops look primitive.

- Erosion of Trust: When anything digital can be forged, the very foundation of shared reality (trust in video evidence, recorded orders, or official communications) crumbles. This creates a “liar’s dividend,” where real evidence can be dismissed as fake.

Adversarial Machine Learning: Poisoning the Well of Defense

This represents a meta-layer of warfare, attacking the AI systems that will increasingly be used for national defense.

- Data Poisoning: An adversary subtly corrupts the training data of a rival’s AI-powered surveillance system, intrusion detection software, or target recognition algorithm. The model learns incorrectly, creating hidden blind spots. For instance, poisoning the data of an AI that monitors satellite imagery could make it systematically ignore certain types of vehicles or facilities.

- Evasion Attacks: Crafting inputs that are specifically designed to fool a deployed AI model. A malicious drone could be painted with a pattern that causes an AI defense system to classify it as a bird. A cyber attack payload could be subtly altered to appear as benign network traffic to an AI security monitor.
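To make the evasion idea concrete for defenders, here is a minimal toy sketch in Python. It assumes a hand-set linear "detector" whose weights, bias, and feature values are entirely hypothetical; real detectors are far more complex, but the underlying weakness (small, targeted input changes that push a score across the decision boundary) is the same one adversarial training tries to harden against.

```python
# Toy illustration only: a hand-set linear "detector", not a real security model.
# All weights and feature values below are hypothetical.

def score(weights, bias, features):
    """Linear score: a positive value means 'malicious', otherwise 'benign'."""
    return sum(w * x for w, x in zip(weights, features)) + bias


def classify(weights, bias, features):
    return "malicious" if score(weights, bias, features) > 0 else "benign"


def evade(weights, features, epsilon):
    """Shift each feature by at most epsilon in the direction that lowers the score."""
    def sign(value):
        return (value > 0) - (value < 0)
    return [x - epsilon * sign(w) for w, x in zip(weights, features)]


WEIGHTS = [0.8, -0.5, 1.2]
BIAS = -1.0
sample = [1.0, 0.5, 0.6]

original_label = classify(WEIGHTS, BIAS, sample)      # scored just above the boundary
perturbed = evade(WEIGHTS, sample, epsilon=0.2)       # each feature moves by only 0.2
perturbed_label = classify(WEIGHTS, BIAS, perturbed)  # now scored below the boundary

print(original_label, "->", perturbed_label)
```

With these illustrative numbers, a change of 0.2 per feature is enough to flip the label, which is why a detector that looks accurate on ordinary inputs can still fail against inputs crafted with knowledge of the model.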

AI-Enabled Infrastructure Attacks (The “Smart Grid” Turned Weapon)

As critical infrastructure—power grids, water systems, transportation networks—becomes “smarter” with AI-driven optimization and control, it also becomes more vulnerable. An AI attacker could:

- Learn and Exploit Complex Systems: Use reinforcement learning to understand the unique operational patterns of a grid and execute a multi-vector attack that causes a cascading failure human hackers might not conceive of.

- Launch Subcomponent Swarm Attacks: Target thousands of smart meters or IoT sensors simultaneously with AI agents, creating destabilizing feedback loops that physical engineers cannot diagnose or respond to in time.

The Strategic Landscape: Doctrine, Deterrence, and the Attribution Abyss

AI is forcing a complete rethink of the strategic principles governing cyber conflict.

The Collapse of Traditional Attribution

A cornerstone of deterrence and diplomatic response is knowing who to blame. AI obfuscates attribution ruthlessly. Attacks can be routed through a cascade of compromised systems in neutral countries. Malware can be designed to mimic the “signature” of another hacking group or nation-state (a “false flag” operation executed with algorithmic precision). The attacking AI itself can be designed to leave no consistent patterns. This creates a dangerous fog of war where retaliation becomes risky, and malicious actors can operate with a new sense of impunity.

The Challenge for Deterrence Theory

Nuclear deterrence relies on the certainty of a devastating, identifiable response. Cyber deterrence has always been murkier. AI makes it exponentially more so. How do you deter an attack that:

- May be carried out by an autonomous system following rules set years prior?

- Cannot be definitively attributed?

- Could escalate from a minor probe to a crippling strike in milliseconds based on its own learned logic?

The old paradigms of mutual assured destruction (MAD) do not translate. We may be moving toward a doctrine of “Continuous Managed Conflict,” where low-level, AI-driven probing and skirmishing are constant, and the goal is resilience and advantage rather than definitive victory.

The Proliferation Problem and the Democratization of Power

Just as AI tools for defense are proliferating, so are offensive tools. What was once the exclusive domain of advanced nation-states is now accessible to smaller states, sophisticated criminal groups, and even well-funded ideological actors. AI-as-a-Service models on the dark web could rent out swarm attack capabilities or deepfake generation for political sabotage. This democratization lowers the barrier to entry for cyber warfare, making the battlefield more crowded and unpredictable.

Case Study: The “Midnight Vortex” Wargame (A Composite from Real Exercises)

A notional wargame conducted by a NATO-affiliated think tank illustrates the new paradigm. “Blue Team” (defender) had advanced, traditional cyber defenses. “Red Team” (attacker) was given access to an AI attack platform.

The Attack Unfolded in Three AI-Driven Waves:

- The Noise Layer: A swarm of AI agents launched a DDoS attack and thousands of hyper-personalized phishing emails. This was not to breach, but to create overwhelming “noise” and trigger alert fatigue in Blue’s security center.

- The Silent Probe: During the noise, a separate, lightweight reinforcement learning (RL) agent entered through a compromised IoT device, a smart fridge in the cafeteria. It spent 48 hours learning network traffic patterns, specifically modeling the behavior of automated backup systems.

- The Strategic Strike: The agent then executed its payload: it didn’t destroy data. Instead, it subtly, incrementally corrupted the integrity of archived financial and logistics data over six months. Shipment manifests were altered by 2%. Account balances were skewed by fractional percentages. The corruption was seeded into backup systems. By the time Blue Team discovered the issue, they couldn’t trust any data from the affected period, facing a crisis of institutional truth that was more paralyzing than a simple ransomware lockout.

The Lesson: The AI didn’t seek a dramatic knockout blow. It sought to erode the foundational trust in the organization’s own information, a strategic goal enabled by patience, adaptation, and learning.
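The slow, fractional corruption in this scenario is hard to spot by eye but easy to detect if each archived record is bound to a keyed hash. The sketch below is one possible approach, not something the wargame describes: it chains an HMAC-SHA256 over each record and the previous digest, so altering any record changes its digest and every later one. The key, the record fields, and the assumption that digests are stored separately from the backups (for example on write-once media) are all hypothetical.

```python
import hashlib
import hmac
import json

GENESIS = b"\x00" * 32  # fixed starting value for the chain


def record_digest(record, previous_digest, key):
    """HMAC over the previous digest plus a canonical JSON form of the record."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hmac.new(key, previous_digest + payload, hashlib.sha256).digest()


def build_chain(records, key):
    """Return one digest per record; store these away from the data itself."""
    digests, previous = [], GENESIS
    for record in records:
        previous = record_digest(record, previous, key)
        digests.append(previous)
    return digests


def first_tampered_index(records, digests, key):
    """Return the index of the first record that no longer matches, or None."""
    previous = GENESIS
    for index, (record, expected) in enumerate(zip(records, digests)):
        actual = record_digest(record, previous, key)
        if not hmac.compare_digest(actual, expected):
            return index
        previous = actual
    return None


KEY = b"hypothetical-key-kept-off-the-network"
manifests = [
    {"shipment": "A-100", "units": 500},
    {"shipment": "A-101", "units": 500},
    {"shipment": "A-102", "units": 250},
]
chain = build_chain(manifests, KEY)

# Simulate a 2% alteration of one archived manifest.
tampered = [dict(record) for record in manifests]
tampered[1]["units"] = 510

print(first_tampered_index(manifests, chain, KEY))  # None: nothing changed
print(first_tampered_index(tampered, chain, KEY))   # 1: the altered manifest
```

The design choice that matters is where the digests and key live: if they sit in the same backup system the attacker can reach, they can be rewritten along with the data, so this only helps when they are kept out of band.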

The Human Element: The Evolving Role of the Cyber Warrior

In this new landscape, the role of the human operator is transformed from front-line soldier to strategic overseer and ethical governor.

From Analysts to AI Handlers and Interpreters

The job shifts from manually triaging alerts to training, managing, and interpreting the outputs of defensive AI systems. The critical skill becomes “prompt engineering for security”—knowing how to ask the right questions of an AI threat-hunting tool and understanding the biases and limitations in its conclusions.

The “Human-on-the-Loop” Imperative

Full autonomy in cyber warfare is a recipe for catastrophic and uncontrollable escalation. The strategic decision to escalate—to move from reconnaissance to disruption, or to counter-attack—must remain under human command. The role becomes “on-the-loop,” monitoring and setting bounds for AI agents, rather than “in-the-loop” for every micro-action. This creates a new pressure point: the speed of human decision-making versus the speed of AI-driven events.
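One way to picture "on-the-loop" control is a policy gate: the AI agent acts freely below a severity ceiling set by humans, and anything above it is held for explicit approval. The following Python sketch is an illustration under assumed names; the severity tiers, `ProposedAction`, and `decide` are hypothetical and not drawn from any real doctrine or product.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    """Hypothetical escalation tiers, ordered from least to most consequential."""
    OBSERVE = 1   # passive monitoring, log collection
    CONTAIN = 2   # isolate a host, revoke a credential
    DISRUPT = 3   # actions with effects beyond the defended network


@dataclass(frozen=True)
class ProposedAction:
    name: str
    severity: Severity


def decide(action, autonomy_ceiling, human_approved=False):
    """Execute autonomously up to the ceiling; otherwise require human approval."""
    if action.severity <= autonomy_ceiling:
        return "execute"
    if human_approved:
        return "execute"
    return "hold_for_human"


ceiling = Severity.CONTAIN  # the bound a human commander sets in advance

print(decide(ProposedAction("collect_logs", Severity.OBSERVE), ceiling))
print(decide(ProposedAction("isolate_host", Severity.CONTAIN), ceiling))
print(decide(ProposedAction("counter_strike", Severity.DISRUPT), ceiling))
print(decide(ProposedAction("counter_strike", Severity.DISRUPT), ceiling, human_approved=True))
```

The gate itself is trivial; the hard part the section describes is the time a held action spends waiting for a human while machine-speed events continue.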

The Moral and Ethical Quagmire

Who is responsible when an autonomous AI weapon violates international law? The programmer? The commander who deployed it? The state that owns it? AI obscures traditional chains of accountability. Furthermore, the use of deepfakes to manipulate populations or AI to cripple civilian infrastructure (like hospitals) presents severe ethical challenges that existing laws of armed conflict are ill-equipped to handle.

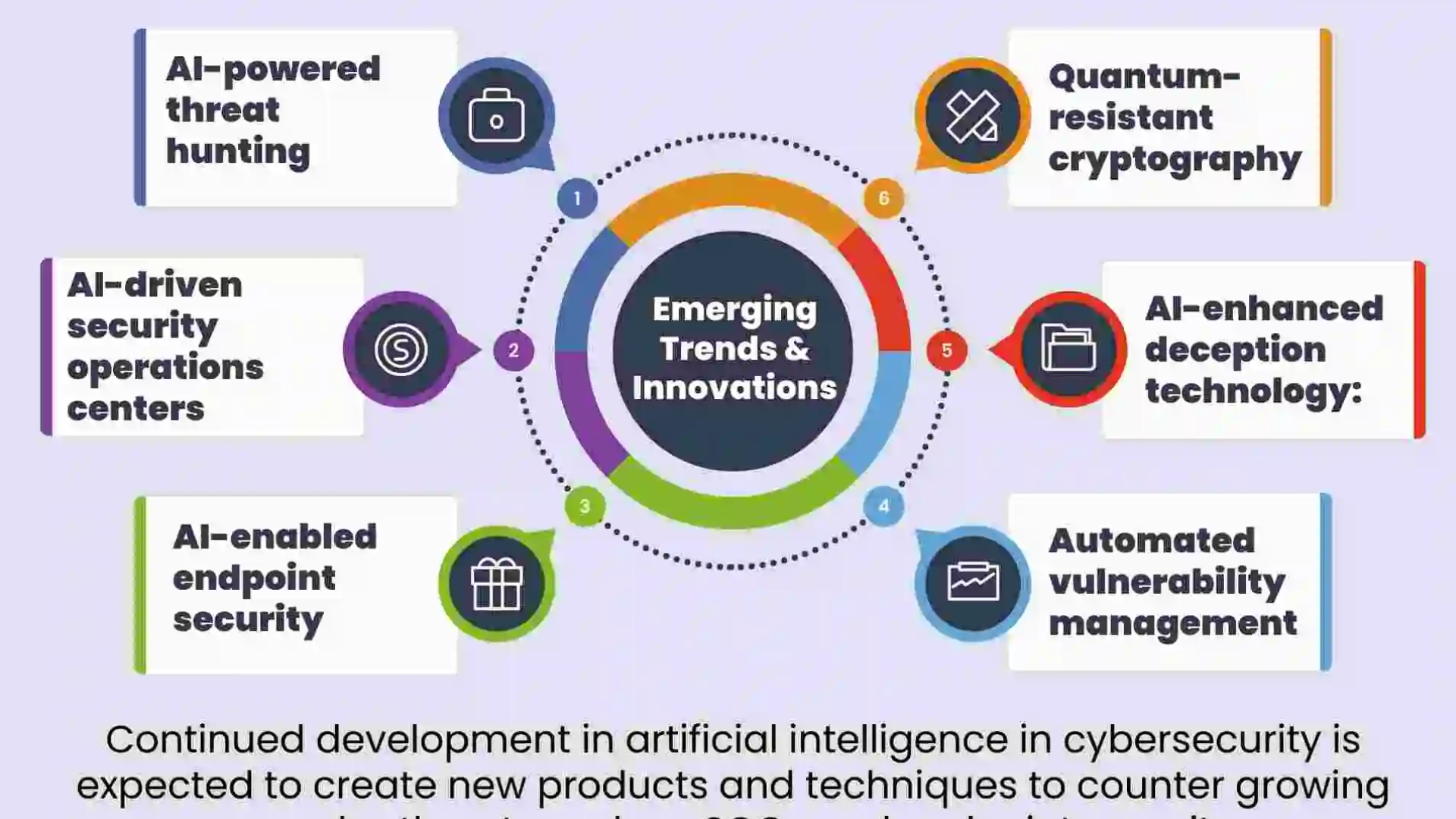

The Road Ahead: Building Resilience in the Age of AI Conflict

In the face of this transformation, a new security paradigm is required, built not on perfect defense, but on intelligent resilience.

1. Adopt Zero-Trust Architectures as a National Imperative: The model of “trust but verify” must be replaced with “never trust, always verify, assume breach” at the scale of critical national infrastructure. This limits the lateral movement of AI agents.

2. Invest in Defensive AI and Adversarial Training: We must fight AI with AI, but defensively. This includes developing systems trained on adversarial examples to resist poisoning and evasion, and using AI for behavioral analytics that can spot the subtle anomalies of another AI’s presence.

3. Develop New Norms and “Digital Geneva Conventions”: The international community must urgently grapple with norms. Potential treaties could ban the use of AI to attack certain civilian infrastructure (e.g., health care AI systems), prohibit the use of fully autonomous cyber weapons that escalate without human consent, and establish protocols for AI attribution and crisis communication.

4. Foster a Culture of Public and Institutional Resilience: Societies must be educated about deepfakes and information hygiene. Organizations must prioritize data integrity verification and disaster recovery that assumes sophisticated, persistent AI threats. The “human firewall” needs upgrading to an “AI-aware skeptic.”

5. Prioritize Explainable AI (XAI) for Defense: If we are to trust defensive AI and maintain human oversight, we must be able to understand its decisions. “Black box” AI is too dangerous for national security applications.
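The behavioral analytics mentioned in item 2 can be illustrated with a deliberately simple baseline: flag any measurement that sits far outside the recent rolling average. The Python sketch below uses only the standard library; the window size, threshold, and the "connections per minute" series are assumptions chosen for illustration, and production systems use far richer models.

```python
from statistics import mean, stdev


def flag_anomalies(values, window=5, threshold=3.0):
    """Return indices whose value deviates strongly from the preceding window."""
    flagged = []
    for i in range(window, len(values)):
        baseline = values[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            # A perfectly flat baseline: any change at all is worth a look.
            if values[i] != mu:
                flagged.append(i)
            continue
        if abs(values[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Hypothetical connections-per-minute readings from one host.
connections_per_minute = [20, 22, 19, 21, 20, 23, 21, 95, 22, 20]

print(flag_anomalies(connections_per_minute))  # [7]
```

A detector like this catches the sudden burst at index 7 but would miss slow, low-amplitude drift of the kind described in the wargame above, which is one reason the list pairs behavioral analytics with data integrity verification rather than relying on either alone.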

Artificial intelligence is not changing cyber warfare incrementally; it is conducting a root-and-branch revolution. It is moving conflict into a domain of speed, scale, and subtlety where human cognition alone cannot compete. The era of the AI-augmented nation-state is here, bringing with it a terrifying potential for destabilization but also a compelling imperative for innovation in defense, diplomacy, and ethics. The ultimate question is not whether AI will shape cyber warfare, since it already does, but whether humanity can develop the wisdom, oversight, and cooperative frameworks to prevent this powerful new tool from mastering its creators. The unseen battlefield is now algorithmic, and the fight for its control will define our collective security for decades to come.

10 Frequently Asked Questions (FAQs)

1. Has AI already been used in a real state-on-state cyber warfare attack?

While public attribution is always difficult, security analysts widely believe that leading cyber powers (the U.S., China, Russia, Israel, etc.) are actively researching, developing, and testing AI-driven tools in cyber operations. Elements like AI-enhanced reconnaissance, automated vulnerability discovery, and AI-powered social media influence campaigns are considered active and ongoing. A full-scale, publicly acknowledged autonomous AI cyber attack has not yet occurred, but the building blocks are actively deployed.

2. Can AI launch a cyber attack completely on its own, without human orders?

Technically, yes, it is possible to create an autonomous system with a pre-defined goal (e.g., “disable this network”). The profound fear among experts is not about a “rogue AI,” but about automated escalation. An AI instructed to “maintain access to this network” might interpret a defensive action as a threat and autonomously launch a more aggressive counter-attack to achieve its goal, sparking an unintended conflict spiral between two automated systems.

3. What is the single most dangerous application of AI in cyber warfare?

Many experts point to the convergence of AI-powered cyber attacks with physical infrastructure. An AI that can learn and exploit the unique, complex vulnerabilities of a smart power grid or financial trading system could cause real-world damage (blackouts, market crashes) with unprecedented speed and sophistication, blurring the line between cyber and traditional warfare.

4. How can a country defend against AI swarm attacks?

Defense requires a layered, AI-augmented approach: Zero-Trust networks to limit swarm movement, AI-powered network behavioral analytics to detect the coordinated anomalous activity of a swarm, and defensive deception technology (AI-driven honeypots) to attract, engage, and study swarm behavior to develop countermeasures.

5. Will AI make cyber warfare more or less likely to lead to actual shooting wars?

It creates a dual effect. On one hand, AI’s attribution problems could make traditional retaliation less likely, perhaps encouraging more low-level conflict. On the other, the potential for rapid, catastrophic effects on civilian life (from an AI-driven infrastructure attack) or for strategic miscalculation by an automated system could create a “flashpoint” scenario that escalates unpredictably into traditional warfare.

6. Are there any international laws regulating AI in cyber warfare?

Currently, no specific treaties exist. The Tallinn Manual (a non-binding academic study) applies existing international law to cyber warfare, but AI poses new challenges. Discussions in UN forums, first the Group of Governmental Experts (GGE) and more recently the Open-Ended Working Group (OEWG), have addressed state behavior in cyberspace, but progress is slow due to geopolitical tensions and the dual-use nature of the technology.

7. What is “algorithmic warfare” and how is it different?

This term describes the next evolution: warfare where the primary target is not the physical asset or network, but the adversary’s decision-making algorithms themselves. This could mean poisoning the data of an enemy’s logistics AI to cripple supply chains, or manipulating the algorithmic models used for economic forecasting or military resource allocation.

8. Can open-source AI (like publicly available LLMs) be used in cyber warfare?

Absolutely. While state actors have classified models, open-source LLMs can be fine-tuned on malicious data to create effective phishing generators, basic exploit code writers, and disinformation engines. This dramatically lowers the barrier to entry for sophisticated information operations and cyber attacks.

9. What role do private tech companies play in this new arms race?

They are the armories. The core AI research and development is happening in companies like Google, Microsoft, OpenAI, and Baidu, as well as in a vibrant open-source community. Governments are both customers and regulators of this technology, creating a complex, tense relationship where private-sector innovation directly fuels national security capabilities.

10. As an individual or a business, what’s the most important thing to do?

Focus on resilience and verification. For businesses: implement Zero-Trust, use AI-powered behavioral security tools, and train staff on AI-generated threats like deepfakes. For individuals: practice extreme skepticism with digital media, use strong unique passwords and MFA, and understand that in the age of AI cyber warfare, your digital hygiene is part of your country’s collective defense.
